It is designed specifically for extracting sequential data. It is a classification of NN to process temporary data. A type of ANN is Recurrent Neural Network (RNN). This connection doesn’t contain just one algorithm but a combination of complex algorithms that help us with advanced computation. To understand LSTM, we must start at the very root, that is neural networks.Īn Artificial Neural Network (ANN) is a structure of neurons connected. The most commonly and efficiently used model to perform this task is LSTM. The Sequence prediction problem has been around for a while now, be it a stock market prediction, text classification, sentiment analysis, language translation, etc. In this article, I hope to help you clearly understand how to implement sentiment analysis on an IMDB movie review dataset using Python. The Jungle Cruise operators would sometimes hang out with the Tahititan Terrace waitresses at parties like the annual employee Banana Ball.Upvote 3+ Long Short Term Memory is considered to be among the best models for sequence prediction. The ride passed by the Tahitian Terrace restaurant. Sometimes I worked on the Jungle Cruise ride. When I was an undergraduate student at the University of California at Irvine, I worked at Disneyland in Anaheim. For LSTM and TA systems, the X input values in each batch must be transposed.Ī DataLoader serves up machine learning training data. The DataLoader class has 9 other parameters but I almost never use those.įor some PyTorch models, such as a deep neural network, the batches can be used directly. The shuffle=True argument is important during training so that the weight updates don’t go into an oscillation which could stall training.

The drop_last=True argument means that if the total number of data items is not evenly divisible by the batch size, the last batch will be smaller than all the other batches, and will not be used. # serve up all data in batches of 3 itemsįor (bix, batch) in enumerate(train_ldr): Train_ldr = T.(train_ds,īatch_size=3, shuffle=True, drop_last=True) Alternatives include return values as a Dictionary or in a List or in an Array. The _getitem_() method accepts an index and returns a single item’s input values, and the associated class label, as a tuple. There are many alternatives, including using a pandas library DataFrame. I use the numpy loadtxt() function to reads numeric data. The _init_() method loads all the data from a training or test file into X (inputs) and Y (class labels) buffers in memory as PyTorch tensors. Self.y_data = T.tensor(tmp_y, dtype=T.int64) # CE loss Self.x_data = T.tensor(tmp_x, dtype=T.int64) space delimitedĪll_xy = np.loadtxt(src_file, usecols=range(0,21),ĭelimiter=" ", comments="#", dtype=np.int64)

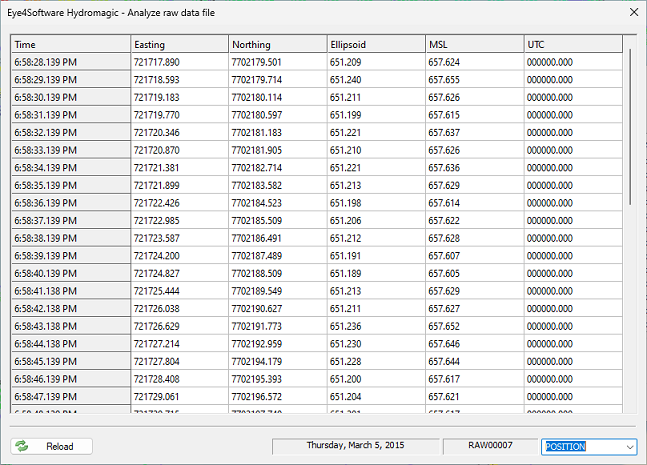

Here’s a Dataset definition for 20-item IMDB data:Ĭlass IMDB_Dataset(T.): You must implement a Dataset that’s specific to your data, but a DataLoader can be used as-is. A Dataset object is fed into a DataLoader object which can serve up the data in batches. Briefly, a Dataset loads data nito memory and has a special _getitem_() method that returns a single data item. My preferred technique is to use the PyTorch Dataset plus DataLoader appraoch. In addition to getting training and test data, another major challenge is serving up training data in batches. The last integer on each line is the class label to predict, 0 = negative review, 1 = positive review. Token IDs of 1, 2, and 3 are reserved for other purposes. For example, the most common word, “the” = 4 and the second most common word is “and” = 5, and so on. The integer values are token IDs where small values are the most common words. Reviews are padded with a special 0 character. The 20 is a parameter and in a non-demo scenario would be set to a larger value like 80 or 100 words. The reviews were filtered to only those reviews that have 20 words or less. My standard data for NLP experimentation is the IMDB movie review dataset.Ī major challenge is getting the raw IMDB data (50,000 movie reviews) and saving a training file and a test file. I’ve been working with LSTM (long short-term memory) and TA (transformer architecture) prediction systems for natural language processing (NLP) problems.
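To make the batching behavior described in this post concrete, here is a minimal plain-Python sketch. It is not PyTorch code and not how DataLoader is implemented. The function names `iter_batches` and `transpose` and the ten made-up data items are all hypothetical, chosen only to show what shuffle, drop_last, and transposing a batch do.

```python
import random

def iter_batches(items, batch_size, shuffle=True, drop_last=True, seed=None):
    """Yield lists of items, mimicking the shuffle / drop_last behavior."""
    order = list(range(len(items)))
    if shuffle:
        random.Random(seed).shuffle(order)  # visit items in random order
    for start in range(0, len(order), batch_size):
        idxs = order[start:start + batch_size]
        if drop_last and len(idxs) < batch_size:
            break  # the final, smaller batch is not used
        yield [items[i] for i in idxs]

def transpose(batch_x):
    """Turn a (batch, seq_len) list of lists into (seq_len, batch)."""
    return [list(col) for col in zip(*batch_x)]

# Ten made-up items: 4 token IDs plus a 0/1 label (hypothetical data,
# shorter than the 20-token reviews to keep the example small).
data = [([i + 4, i + 5, i + 6, i + 7], i % 2) for i in range(10)]

batches = list(iter_batches(data, batch_size=3, seed=0))
print(len(batches))  # 10 items / batch size 3 gives 3 full batches

xs = [x for (x, y) in batches[0]]  # inputs of the first batch
xt = transpose(xs)                 # sequence-first layout
print(len(xs), len(xs[0]))         # batch-first dimensions
print(len(xt), len(xt[0]))         # sequence-first dimensions
```

With 10 items and a batch size of 3, one item is left over and skipped when drop_last is set. With real PyTorch tensors, one option for the transpose step is a call such as `X.transpose(0, 1)`, which turns a batch-first tensor into the sequence-first layout that `torch.nn.LSTM` expects by default.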
